Using Airflow Connections

In any connector page, you might have seen an example of how to build a DAG that runs the ingestion with Airflow (e.g., Athena). One possible approach to retrieving sensitive information from Airflow is to use Airflow’s Connections. These connections can be stored as environment variables, in Airflow’s underlying database, or in external services such as HashiCorp Vault. For external systems, you’ll need to install the corresponding provider package and configure a Secrets Backend. To decide where to store these credentials, refer to Airflow’s documentation.

Example

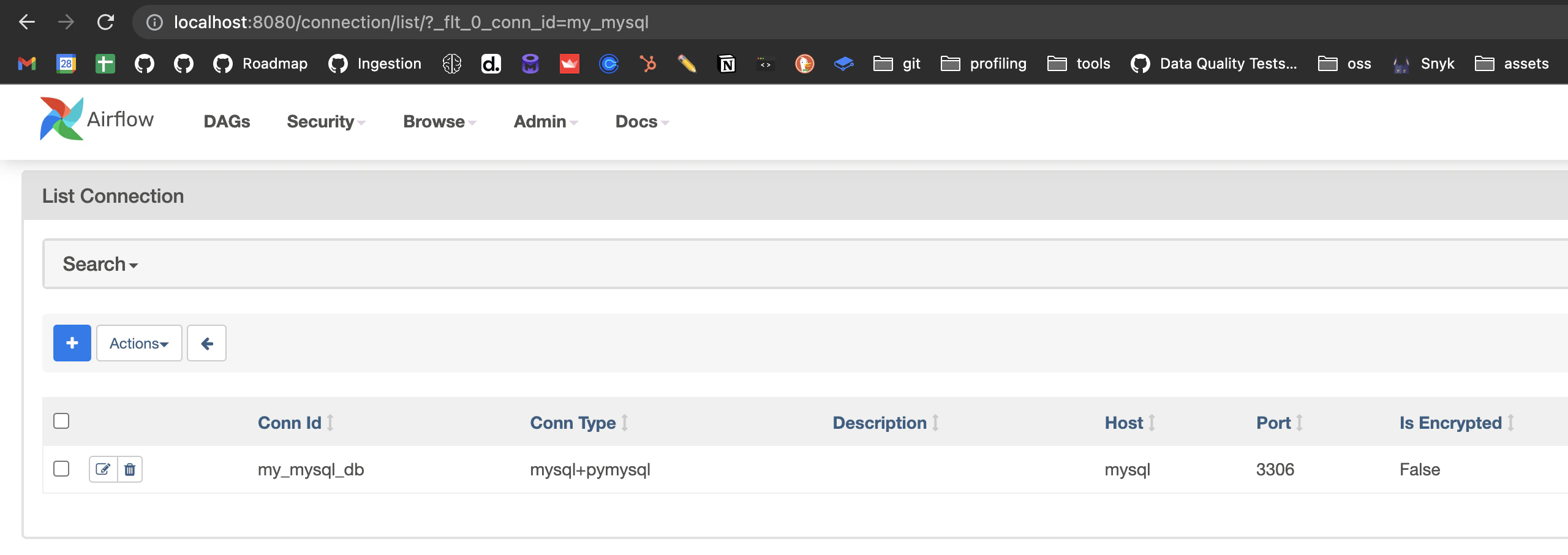

Let’s go over an example of how to create a connection to extract data from MySQL and what the DAG looks like afterwards.

Step 1 - Create the Connection

From our Airflow host (e.g., `docker exec -it openmetadata_ingestion bash` if testing in Docker), you can run:
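Besides creating the connection with a command on the host, Airflow can also resolve a connection from an environment variable named `AIRFLOW_CONN_<CONN_ID>` (the connection id upper-cased) that holds a connection URI, which is the environment-variable storage option mentioned earlier. The sketch below builds such a URI and variable name. The connection id `my_mysql_db`, the credentials, the host `mysql` and the database name are hypothetical placeholders, and the helper functions are illustrative, not part of Airflow.

```python
import os
from urllib.parse import quote


def mysql_conn_uri(login: str, password: str, host: str, port: int, schema: str) -> str:
    """Build an Airflow-style connection URI, percent-encoding credentials and schema."""
    # Encoding matters: characters such as '@' or '/' in a password would otherwise break the URI.
    return (
        f"mysql://{quote(login, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{quote(schema, safe='')}"
    )


def conn_env_var(conn_id: str) -> str:
    """Airflow looks up connections in AIRFLOW_CONN_<CONN_ID> (conn_id upper-cased)."""
    return f"AIRFLOW_CONN_{conn_id.upper()}"


if __name__ == "__main__":
    # Hypothetical values for a local Docker setup; replace them with your own.
    uri = mysql_conn_uri("openmetadata_user", "p@ss/word", "mysql", 3306, "openmetadata_db")
    name = conn_env_var("my_mysql_db")
    # Setting os.environ only affects this process and its children; the Airflow
    # scheduler and workers need the variable in their own environment.
    os.environ[name] = uri
    print(f"export {name}='{uri}'")
```

The printed `export` line is what you would add to the environment of the Airflow processes; storing the connection in the metadata database or a secrets backend avoids keeping credentials in plain environment variables.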

Step 2 - Understanding the shape of a Connection

On the same host, we can open a Python shell to explore the Connection object in more detail. To do so, we first need to retrieve the connection from Airflow. We will use the `BaseHook` for that, as the connection is not stored in any external system.
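In an Airflow 2.x shell, the lookup is `BaseHook.get_connection("my_mysql_db")` (imported from `airflow.hooks.base`), which returns a `Connection` exposing attributes such as `conn_type`, `host`, `login`, `password`, `port` and `schema`. So that it runs without Airflow installed, the sketch below uses a hypothetical stand-in class, `ConnectionShape`, that parses the same kind of URI into those fields, to illustrate what the object carries. It is an illustration of the shape, not Airflow's own parser.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import unquote, urlsplit


@dataclass
class ConnectionShape:
    """Hypothetical stand-in mirroring the main fields of airflow.models.Connection."""

    conn_type: str
    host: Optional[str]
    login: Optional[str]
    password: Optional[str]
    port: Optional[int]
    schema: Optional[str]

    @classmethod
    def from_uri(cls, uri: str) -> "ConnectionShape":
        parts = urlsplit(uri)
        # The URI path is "/<schema>"; strip the leading slash and decode it.
        schema = unquote(parts.path.lstrip("/")) or None
        return cls(
            conn_type=parts.scheme,
            host=parts.hostname,
            # urlsplit does not decode credentials, so unquote them explicitly.
            login=unquote(parts.username) if parts.username else None,
            password=unquote(parts.password) if parts.password else None,
            port=parts.port,
            schema=schema,
        )


if __name__ == "__main__":
    shape = ConnectionShape.from_uri(
        "mysql://openmetadata_user:p%40ss%2Fword@mysql:3306/openmetadata_db"
    )
    print(shape.host, shape.port, shape.schema)
```

With a real `Connection`, you would read the same attribute names (for example `conn.host` and `conn.port`) when building the ingestion configuration.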

Step 3 - Write the DAG
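The core of such a DAG is mapping the Connection's fields onto the ingestion configuration. The sketch below shows that mapping as a plain function, assuming the usual `source` / `sink` / `workflowConfig` layout of OpenMetadata ingestion configs. The function name, the service name, the server URL and the exact `serviceConnection` keys (`username`, `password`, `hostPort`) are assumptions; check the MySQL connector page for your OpenMetadata version. Authentication settings for the OpenMetadata server are omitted.

```python
from types import SimpleNamespace
from typing import Any, Dict


def build_mysql_workflow_config(conn: Any, service_name: str, om_host_port: str) -> Dict[str, Any]:
    """Map Connection-like fields (login, password, host, port) onto an ingestion config.

    `conn` can be an Airflow Connection or any object with the same attributes.
    Key names are assumptions and may differ between OpenMetadata versions.
    """
    return {
        "source": {
            "type": "mysql",
            "serviceName": service_name,
            "serviceConnection": {
                "config": {
                    "type": "Mysql",
                    "username": conn.login,
                    # Assumption: some versions nest the password under an auth block.
                    "password": conn.password,
                    "hostPort": f"{conn.host}:{conn.port}",
                }
            },
            "sourceConfig": {"config": {"type": "DatabaseMetadata"}},
        },
        "sink": {"type": "metadata-rest", "config": {}},
        # Auth settings for the OpenMetadata server are intentionally left out here.
        "workflowConfig": {"openMetadataServerConfig": {"hostPort": om_host_port}},
    }


if __name__ == "__main__":
    # Stand-in for BaseHook.get_connection("my_mysql_db") inside a task callable.
    conn = SimpleNamespace(host="mysql", port=3306, login="openmetadata_user", password="secret")
    cfg = build_mysql_workflow_config(conn, "local_mysql", "http://localhost:8585/api")
    print(cfg["source"]["serviceConnection"]["config"]["hostPort"])
```

Inside a DAG, calling `BaseHook.get_connection` from the task's Python callable (rather than at module level) means the secret is fetched when the task runs instead of every time the scheduler parses the DAG file; the resulting dictionary is then handed to the OpenMetadata ingestion workflow as on the connector pages.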

A full example of how to write a DAG that ingests data using our Connection can look like this:

Option B - Reuse an existing Service

As explained in the Managing Credentials guide, once a service exists in OpenMetadata, its connection details are stored and can be reused, so you can simply omit the `serviceConnection` YAML entries in your DAG:
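As a sketch of what that omission looks like, the hypothetical function below builds the same kind of configuration but leaves `serviceConnection` out of `source`, so only the service name identifies which stored connection to reuse. The `mysql` source type, the `DatabaseMetadata` source config and the server URL are illustrative assumptions; adapt them to your connector and OpenMetadata version.

```python
from typing import Any, Dict


def build_existing_service_config(service_name: str, om_host_port: str) -> Dict[str, Any]:
    """Config for a service that already exists in OpenMetadata.

    `serviceConnection` is deliberately absent: the connection details already
    stored for `service_name` are reused instead.
    """
    return {
        "source": {
            "type": "mysql",  # assumption: same connector as the example above
            "serviceName": service_name,
            "sourceConfig": {"config": {"type": "DatabaseMetadata"}},
        },
        "sink": {"type": "metadata-rest", "config": {}},
        # The workflow still needs to reach the OpenMetadata server (auth omitted).
        "workflowConfig": {"openMetadataServerConfig": {"hostPort": om_host_port}},
    }


if __name__ == "__main__":
    cfg = build_existing_service_config("local_mysql", "http://localhost:8585/api")
    print(sorted(cfg["source"]))
```

With this approach, the DAG does not need to read any database credentials from Airflow at all; they stay in OpenMetadata.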